Abstract

Recent advances in deep monocular visual SLAM have achieved impressive accuracy and dense reconstruction capabilities, yet their robustness to scale inconsistency in large-scale indoor environments remains largely unexplored. Existing benchmarks are limited to room-scale or structurally simple settings, leaving critical issues of intra-session scale drift and inter-session scale ambiguity insufficiently addressed. To fill this gap, we introduce the ScaleMaster Dataset, the first benchmark explicitly designed to evaluate scale consistency under challenging scenarios such as multi-floor structures, long trajectories, repetitive views, and low-texture regions. We systematically analyze the vulnerability of state-of-the-art deep monocular visual SLAM systems to scale inconsistency, providing both qualitative and quantitative evaluations. Crucially, our analysis extends beyond traditional trajectory metrics to include a direct map-to-map quality assessment using metrics such as Chamfer distance against high-fidelity 3D ground truth. Our results reveal that while these methods demonstrate strong performance on existing benchmarks, they suffer from severe scale-related failures in realistic, large-scale indoor environments. By releasing the ScaleMaster Dataset and baseline results, we aim to establish a foundation for future research toward developing scale-consistent and reliable visual SLAM systems.
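To make the map-to-map metric concrete, here is a minimal sketch of a symmetric Chamfer distance between two small point clouds. It assumes one common convention (the sum of the two mean nearest-neighbour distances, unsquared); the exact variant used in the benchmark is not stated here, and the function names and toy clouds are hypothetical. The brute-force search is only meant for illustration, since real maps would need a spatial index such as a k-d tree.

```python
import math


def nearest_distance(p, cloud):
    """Euclidean distance from point p to its nearest neighbour in cloud."""
    return min(math.dist(p, q) for q in cloud)


def chamfer_distance(a, b):
    """Symmetric Chamfer distance: mean nearest-neighbour distance a->b plus b->a."""
    a_to_b = sum(nearest_distance(p, b) for p in a) / len(a)
    b_to_a = sum(nearest_distance(q, a) for q in b) / len(b)
    return a_to_b + b_to_a


# Toy clouds (hypothetical): the reconstruction is the ground truth
# shifted by 0.1 m along x, so every nearest neighbour is 0.1 m away.
ground_truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
reconstruction = [(x + 0.1, y, z) for x, y, z in ground_truth]

cd_shifted = chamfer_distance(reconstruction, ground_truth)
cd_identical = chamfer_distance(ground_truth, ground_truth)
```

A map with the wrong scale moves points away from their true positions in proportion to their distance from the alignment origin, so this metric grows with scale error even when the trajectory shape looks plausible.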

Dataset Statistics

- Sequences: 25

- Total length: 3.8 km+

- Environments: 10+

- Data type: RGB+D+IMU

Baseline SE(3) Pose Refinement

Raw ARKit VIO poses accumulate drift over long trajectories. We correct this by retrieving loop-closure candidates, verifying each one manually, and optimizing the pose graph to produce drift-corrected ground-truth poses.
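The effect of a loop closure on accumulated drift can be seen in a toy one-dimensional pose graph. The sketch below is a linear least-squares analogue written for illustration only: the actual pipeline optimizes SE(3) poses with GTSAM, and every name, measurement, and weight here is a hypothetical assumption.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        s = sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x


def optimize_pose_graph(num_poses, edges, prior_weight=1e6):
    """Minimise sum of w * (x_j - x_i - z)^2 over 1-D poses, anchoring x_0 at 0."""
    H = [[0.0] * num_poses for _ in range(num_poses)]
    g = [0.0] * num_poses
    H[0][0] += prior_weight  # strong prior on x_0 removes the gauge freedom
    for i, j, z, w in edges:
        # Normal equations for the residual r = x_j - x_i - z.
        H[i][i] += w
        H[j][j] += w
        H[i][j] -= w
        H[j][i] -= w
        g[i] -= w * z
        g[j] += w * z
    return solve_linear(H, g)


# True motion: two steps forward, two steps back (positions 0, 1, 2, 1, 0).
# Each odometry measurement is biased by +0.1, which mimics drift.
odometry = [1.1, 1.1, -0.9, -0.9]

dead_reckoning = [0.0]
for z in odometry:
    dead_reckoning.append(dead_reckoning[-1] + z)

edges = [(k, k + 1, z, 1.0) for k, z in enumerate(odometry)]
edges.append((4, 0, 0.0, 100.0))  # verified loop closure: pose 4 coincides with pose 0

optimized = optimize_pose_graph(5, edges)
```

Dead reckoning ends about 0.4 units away from the start, while the optimized trajectory ends almost exactly at the start because the loop-closure residual spreads the accumulated error across the odometry edges.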

Pipeline Overview

- ARKit VIO odometry: Raw 6-DoF poses captured from Apple ARKit; drift accumulates over large-scale trajectories.

- HLoc retrieval: NetVLAD retrieves loop-closure candidates; SuperPoint + LightGlue matches are then checked for geometric consistency by essential-matrix estimation.

- Manual verification: Each candidate is accepted or rejected interactively, ensuring that only reliable loop pairs are used.

- SE(3) relative pose estimation: Depth Anything V3 provides metric depth, which is used to compute the 6-DoF transform between accepted loop pairs.

- GTSAM PGO: Pose graph optimization jointly minimizes odometry and loop-closure residuals, yielding drift-corrected trajectories.
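The relative pose step above needs 3D points, which means matched keypoints have to be lifted from pixels into the camera frame using metric depth. A minimal sketch of that back-projection under a pinhole camera model follows. The intrinsics and pixel values are hypothetical, depth is assumed to be measured along the optical axis, and the estimator that turns the lifted points into an SE(3) transform is not specified on this page, so it is left out.

```python
def backproject(u, v, depth, fx, fy, cx, cy):
    """Lift pixel (u, v) with metric depth (metres along the optical axis)
    to a 3-D point in the camera frame under a pinhole model."""
    x = (u - cx) / fx * depth
    y = (v - cy) / fy * depth
    return (x, y, depth)


# Hypothetical intrinsics for a 640x480 image.
fx = fy = 500.0
cx, cy = 320.0, 240.0

# A keypoint at the principal point lies on the optical axis.
center = backproject(320.0, 240.0, 2.0, fx, fy, cx, cy)

# A keypoint 100 px to the right at the same depth.
right = backproject(420.0, 240.0, 2.0, fx, fy, cx, cy)
```

Because the depth is metric, the lifted points and any transform estimated from them carry real-world scale, which is what allows the loop-closure constraint to be expressed as a full 6-DoF relative pose.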

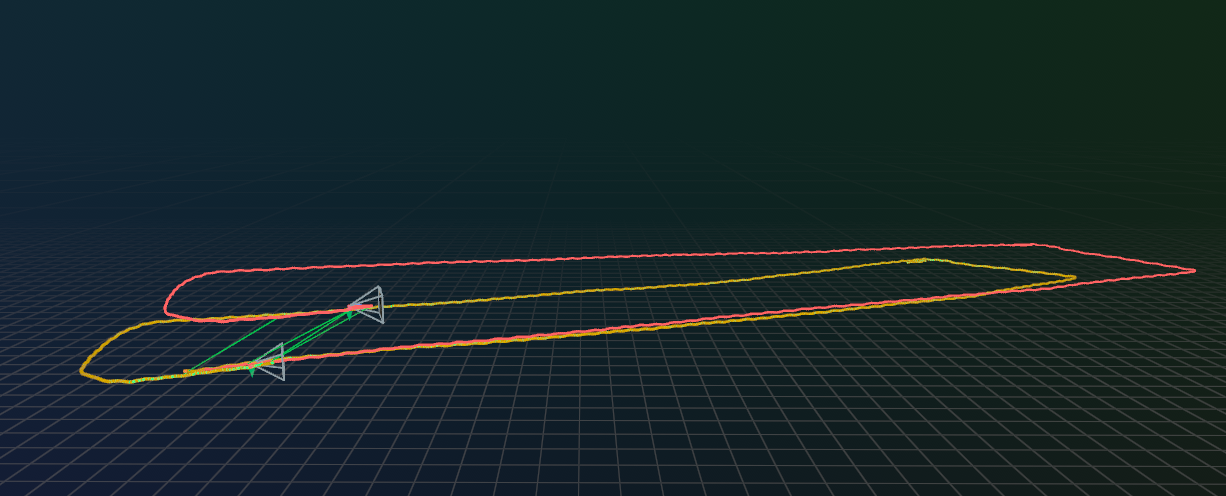

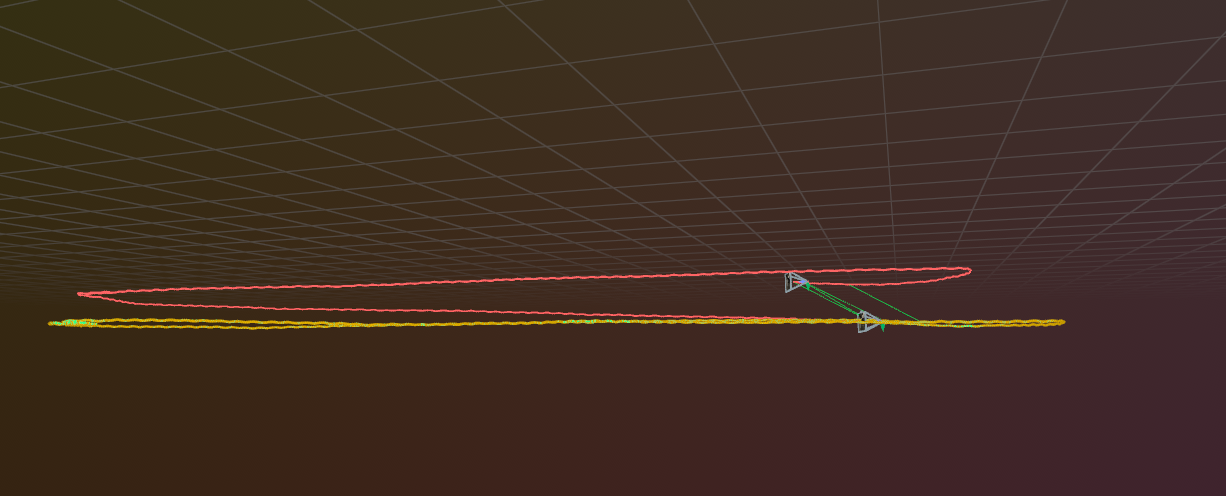

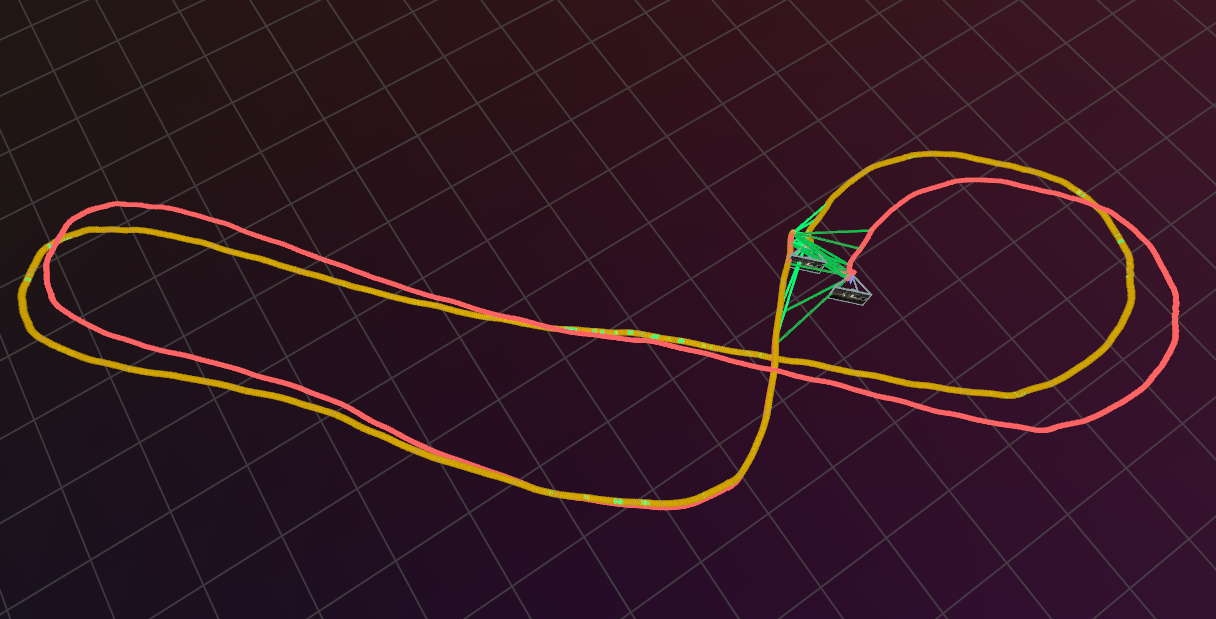

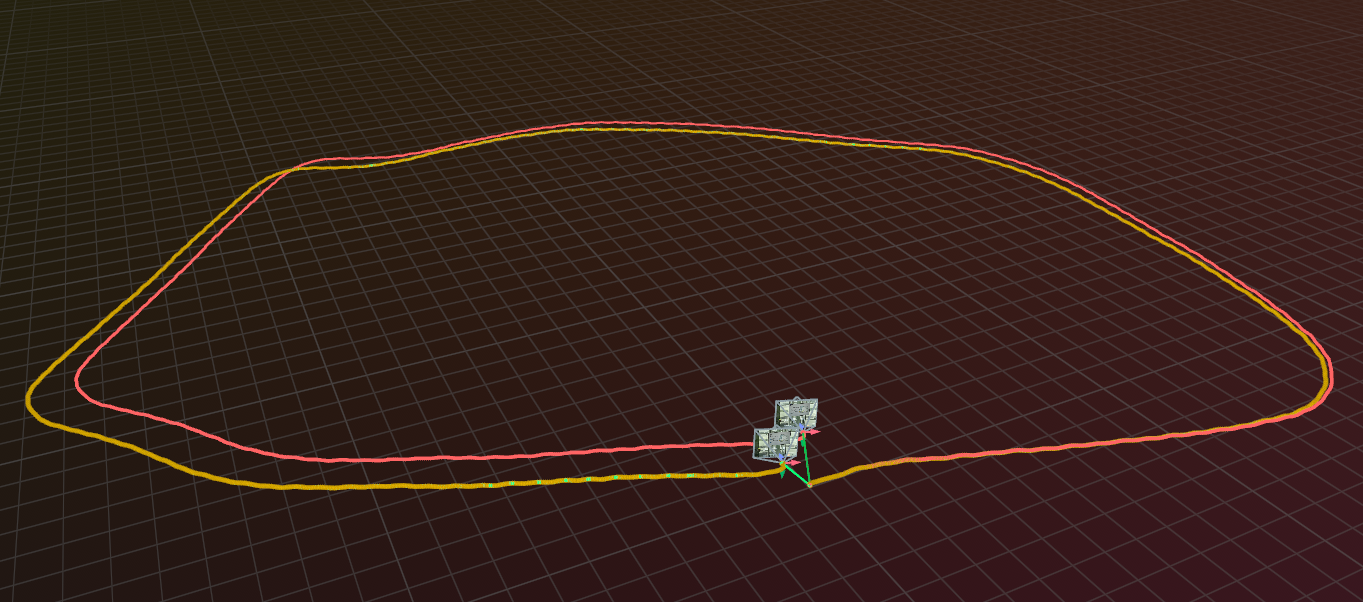

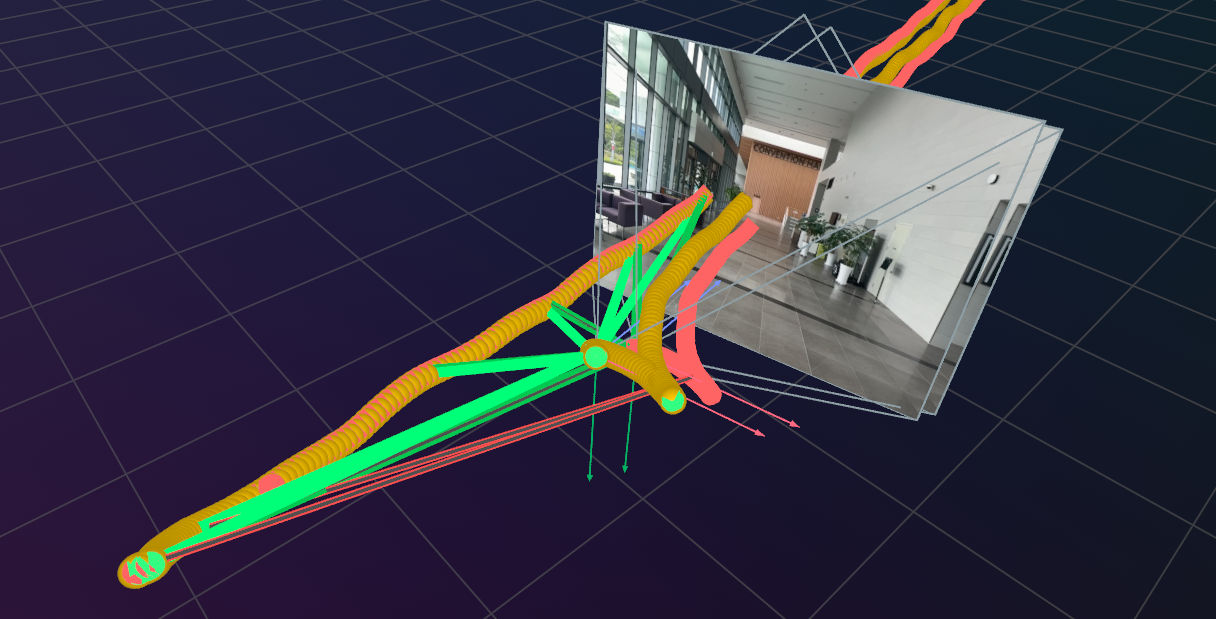

Before / After Pose Graph Optimization

Red = trajectory before PGO | Yellow = trajectory after PGO | Green = verified loop closures

Rerun Visualization

3D trajectory with camera frustums, loop closure edges, and RGB images in Rerun.

Dataset Sequences

📚 Library Environments (9 sequences)

Library 01

Library 02

Library 03

Library 04

Library 05

Library 06

Library 07

Library 08

Library 09

🏢 Large Hall Environments (5 sequences)

LargeHall 01

LargeHall 02

LargeHall 03

LargeHall 04

LargeHall 05

🚗 Parking & Basement (3 sequences)

Parking 01

Parking 02

Basement 01

🪜 Stairs & Station (3 sequences)

Stairs 01

Stairs 02

Station 01

🏠 Indoor Rooms (5 sequences)

HotelRoom 01

Lab 01

Office 01

Lobby 01

Lounge 01

Download Dataset

Citation

@inproceedings{ju2026scalemaster,
  title={Have We Mastered Scale in Deep Monocular Visual SLAM? The ScaleMaster Dataset and Benchmark},
  author={Ju, Hyoseok and Suh, Bokeon and Kim, Giseop},
  booktitle={Proceedings of the IEEE International Conference on Robotics and Automation (ICRA)},
  year={2026},
  note={To appear}
}